Each node provides storage capacity to your cluster. Elasticsearch will stop allocating shards to a node as its disk starts to fill up. This is controlled with the `cluster.routing.allocation.disk.watermark.low` parameter. By default, no new shards will be allocated to a machine that goes above 85% disk usage.

Clearly you must manage the disk space when all of your nodes are running, but what happens when a node fails?

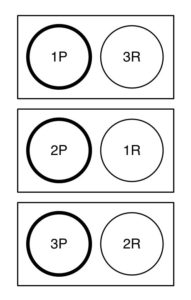

Let’s look at a three-node cluster, set up with three shards and one replica of each, so data is evenly spread out across the cluster:

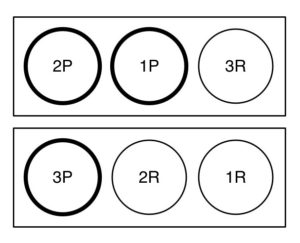

If each node has 1TB of disk space for data, each would hit the per-node 85% limit at 850GB. If one node failed, the 6 total shards would need to be distributed across two nodes. In our example, if we lost node #1, the primary for shard 1 and the replica for shard 3 would be lost. The replica for shard 1 that is on node #2 would be promoted to primary, but we would then have no replica for either shard 1 or shard 3. Elasticsearch would try to rebuild the replicas on the remaining hosts.

This is good on paper, except each of the remaining two nodes would need to absorb up to 425GB. The remaining nodes would already be at their limit, so no new shards would be created.
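The arithmetic behind that failure case can be sketched in a few lines of Python. This is only an illustration: the helper name `usage_after_node_failure` is hypothetical, and it assumes every node holds the same amount of data and that the lost node's data is spread evenly over the survivors.

```python
def usage_after_node_failure(used_per_node_gb: float, node_count: int) -> float:
    """Per-node usage once one failed node's data is spread evenly over the survivors."""
    survivors = node_count - 1
    return used_per_node_gb + used_per_node_gb / survivors


DISK_GB = 1000    # 1TB per node, as in the example
WATERMARK = 0.85  # default low watermark (85%)

limit_gb = DISK_GB * WATERMARK  # 850GB per node
after_gb = usage_after_node_failure(limit_gb, node_count=3)

print(f"limit per node: {limit_gb:.0f}GB")
print(f"needed per node after one failure: {after_gb:.0f}GB")
print("fits under the watermark" if after_gb <= limit_gb else "does not fit under the watermark")
```

With nodes already sitting at 850GB, each survivor would need 850GB plus 425GB, which is more than the disk holds, let alone the watermark.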

To plan for a node outage, you need to have enough free disk space on each node to reallocate the primary and replica data from the dead node.

This formula will yield the maximum amount of data a node can safely hold:

(disk per node * .85) * ((node count - 1) / node count)

In my example, we would get:

( 1TB * .85 ) * ( 2 / 3 ) = 566GB

If your three nodes contained 566GB of data each and one node failed, 283GB of data would be rebuilt on each of the remaining two nodes, putting them at 849GB of used space. This is just below the 85% limit of 850GB.
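To double-check the numbers, here is a minimal Python sketch of the single-replica formula. The function name `max_safe_data_per_node` is my own, sizes are in GB with 1TB taken as 1000GB, and the redistribution is assumed to be perfectly even.

```python
def max_safe_data_per_node(disk_gb: float, node_count: int, watermark: float = 0.85) -> float:
    """Maximum data per node that still fits under the watermark after losing one node."""
    return disk_gb * watermark * (node_count - 1) / node_count


NODES = 3
safe_gb = max_safe_data_per_node(disk_gb=1000, node_count=NODES)

# After one node fails, its data is split across the remaining nodes.
after_failure_gb = safe_gb + safe_gb / (NODES - 1)

print(f"safe data per node: {safe_gb:.1f}GB")
print(f"usage per node after one failure: {after_failure_gb:.1f}GB")
```

The unrounded result is about 566.7GB per node, which lands exactly on the 850GB limit after a failure. That is one more reason to pad the number rather than run right at it.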

I would pad the number a little and limit the disk space used to 550GB for each node, for 1.65TB of data in total across the 3-node cluster. This number plays a part in your data retention policy and cluster sizing strategies.

If 1.65TB is too low, you either need to add more space to each node, or add more nodes to the cluster. If you added a 4th similarly-sized node, you’d get:

( 1TB * .85 ) * ( 3 / 4 ) = 637GB

which would allow about 2.5TB of storage across the entire cluster.

The formula shown is based on one replica shard. If you had configured your cluster with more replicas (to survive the outage of more than one node), note that the formula is really:

(disk per node * .85) * ((node count - replica count) / node count)

If we had two replicas in our example, we’d get:

( 1TB * .85 ) * ( 1 / 3 ) = 283GB

So you would only allow 283GB of data per node if you wanted to survive a 2-node outage in a 3-node cluster.
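For comparing cluster layouts, the general formula can be wrapped in a small helper. This is a rough Python sketch, not an Elasticsearch API: `safe_capacity` and its parameters are names I made up, sizes are in GB, and the watermark is assumed to be the default 85%.

```python
def safe_capacity(disk_gb: float, node_count: int, replica_count: int = 1,
                  watermark: float = 0.85) -> tuple[float, float]:
    """Return (safe data per node, safe data across the cluster) in GB."""
    if not 0 < replica_count < node_count:
        raise ValueError("replica count must be at least 1 and less than the node count")
    per_node = disk_gb * watermark * (node_count - replica_count) / node_count
    return per_node, per_node * node_count


# The three layouts discussed in this post: (node count, replica count).
for nodes, replicas in [(3, 1), (4, 1), (3, 2)]:
    per_node, total = safe_capacity(1000, nodes, replicas)
    print(f"{nodes} nodes, {replicas} replica(s): "
          f"{per_node:.1f}GB per node, {total:.0f}GB across the cluster")
```

Running it for your own disk size and node count gives a quick upper bound to feed into retention and sizing decisions. I would still pad the result, as above.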