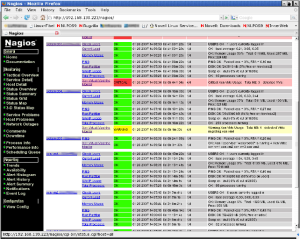

A client has some code that is instrumented with a New Relic agent. We wanted to track the performance of individual portions of the code – mostly dependencies on other services like databases and third-party data sources. Rather than have yet another alerting platform, we wanted to pull the information into Nagios. Fortunately, New Relic offers an API that’s pretty easy to use.

The first step is to enable API access in your New Relic account and get the API key. According to the New Relic documentation, the steps are:

- Sign in to the New Relic user interface.

- Select (account name) > Account settings > Integrations > Data sharing > API access.

- Click Enable API Access, and then copy or make a note of your API key.

Once you have the API key, the first request you’ll want to make is to get your account_id. Try this:

curl -gH "x-api-key:YOUR_API_KEY" 'https://rpm.newrelic.com/api/v1/accounts.xml'

Note that the account_id also appears in the page URLs when you’re logged in to the New Relic website.

With that done, there are only a handful of URLs that you might want to hit. New Relic breaks things down by application, so you’ll need a list of those:

curl -gH "x-api-key:YOUR_API_KEY" 'https://rpm.newrelic.com/api/v1/accounts/YOUR_ACCOUNT_ID/applications.xml'

The results of that call will not only give you the IDs for each application, but also links to the Overview and Servers pages. Again, note that the application ID appears in the page URLs when you’re on the New Relic website.

Grab a list of the metrics that are available for the application:

curl -gH "x-api-key:YOUR_API_KEY" 'https://api.newrelic.com/api/v1/accounts/YOUR_ACCOUNT_ID/applications/YOUR_APPLICATION_ID/metrics.xml'

And finally, pull a statistic for that metric:

curl -gH "x-api-key:YOUR_API_KEY" 'https://api.newrelic.com/api/v1/accounts/YOUR_ACCOUNT_ID/applications/YOUR_APPLICATION_ID/data.xml?metrics[]=YOUR_METRIC_NAME&field=call_count&begin=2013-11-14T00:00:00&end=2013-11-14T23:59:59&summary=1'

This will return XML-formatted data for that metric for a single day. With “summary=1”, only one row is returned. To get smaller buckets throughout the day, leave “summary” off.
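The same request can be assembled in a script. What follows is a minimal Python sketch, not the plugin itself: the function names `build_data_url` and `fetch` are made up for illustration, and the only things taken from the curl command above are the URL layout, the query parameters, and the `x-api-key` header.

```python
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API_BASE = "https://api.newrelic.com/api/v1"


def build_data_url(account_id, app_id, metric, field, begin, end,
                   summary=True, fmt="xml"):
    """Build the data URL for one metric and one field (hypothetical helper)."""
    params = [
        ("metrics[]", metric),
        ("field", field),
        ("begin", begin),
        ("end", end),
    ]
    if summary:
        # summary=1 collapses the time range into a single row
        params.append(("summary", "1"))
    # Keep "[]" and ":" readable, as in the curl example; other reserved
    # characters in the metric name (such as "/") are percent-encoded.
    query = urlencode(params, safe="[]:")
    return (f"{API_BASE}/accounts/{account_id}/applications/{app_id}"
            f"/data.{fmt}?{query}")


def fetch(url, api_key):
    """Send the request with the x-api-key header and return the body as text."""
    req = Request(url, headers={"x-api-key": api_key})
    with urlopen(req, timeout=30) as resp:
        return resp.read().decode("utf-8")
```

Calling `build_data_url` with `summary=False` leaves the parameter off, which corresponds to asking for the smaller buckets.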

In a quick scan, we didn’t find a way to get more than one metric value per call, so we make multiple calls to get what we need.
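One way to organize those calls is to generate a URL for every combination you care about and then request them one at a time. The sketch below assumes that "one value per call" means one request per (metric, field) pair; `plan_requests` and its arguments are hypothetical names, and `app_url` stands for the application URL up to, but not including, `/data.json`.

```python
from itertools import product
from urllib.parse import urlencode


def plan_requests(app_url, metrics, fields, begin, end):
    """Return a dict mapping (metric, field) to the URL that fetches it."""
    urls = {}
    for metric, field in product(metrics, fields):
        query = urlencode(
            [
                ("metrics[]", metric),
                ("field", field),
                ("begin", begin),
                ("end", end),
                ("summary", "1"),  # one row per request
            ],
            safe="[]:",
        )
        urls[(metric, field)] = f"{app_url}/data.json?{query}"
    return urls
```

The number of requests grows as metrics times fields, which is worth keeping in mind when choosing a check interval.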

Note that you can use “data.json” or “data.csv” to have the data returned in different formats. We used XML during manual development and then switched to JSON when we started writing the Nagios plugin.

We now use this plugin to check over 50 metrics every three minutes for the client, pulling the average_response_time, max_response_time, and call_count.
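For readers who want to build something similar, here is a rough Python sketch of the part of a check that turns a response into a Nagios result. It is not the plugin described above. It assumes the JSON body is a list of objects with the requested field as a key, which you should verify against a real response. The `evaluate` function and the thresholds are illustrative. The exit codes (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN) are the standard Nagios plugin convention.

```python
import json

OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def evaluate(body, field, warn, crit):
    """Return (exit_code, status_line) for one summary response.

    Assumption: body is JSON shaped like [{"<field>": <number>, ...}].
    """
    try:
        rows = json.loads(body)
        value = float(rows[0][field])
    except (ValueError, KeyError, IndexError, TypeError):
        # Anything unparseable is reported as UNKNOWN rather than OK
        return UNKNOWN, f"UNKNOWN - could not read {field} from response"

    if value >= crit:
        code = CRITICAL
    elif value >= warn:
        code = WARNING
    else:
        code = OK
    # Text after the "|" is Nagios performance data: label=value;warn;crit
    return code, f"{LABELS[code]} - {field}={value} | {field}={value};{warn};{crit}"
```

In a real plugin, the script would print the status line and call `sys.exit` with the returned code so that Nagios picks up the state.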